Autopilot is a new mode of operation for Google Kubernetes Engine (GKE) where compute capacity is dynamically provisioned based on your pod’s requirements. Among other innovations, it essentially functions as a fully automatic cluster autoscaler.

Update: GKE now has an official guide for provisioning spare capacity.

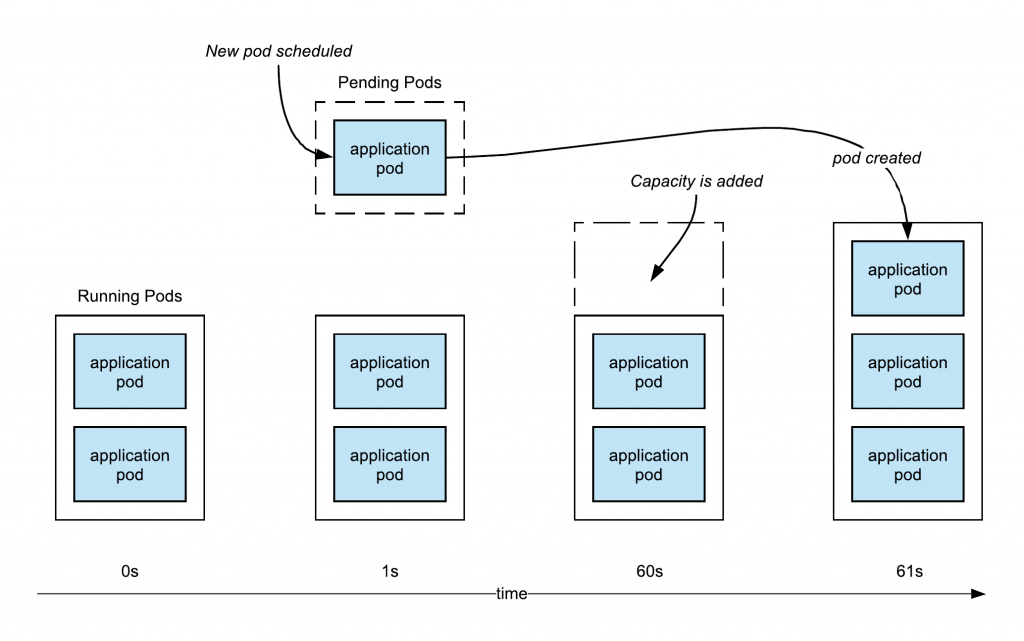

When you deploy a new pod in this environment, sometimes there’ll be existing spare capacity on the cluster and it will start booting right away; other times, more compute capacity (nodes) will need to be added. Autopilot is quick to respond when more resources are needed, provisioning new capacity in around 60 to 80 seconds, after which the pod will boot.

A minute to add capacity is already pretty fast, but if you want to have your own capacity buffer to enable bursts of super-fast scheduling (where new pods can start booting immediately), there is a solution.

The following excerpt from my book Kubernetes Quickly demonstrates how to add spare capacity so that newly scheduled Pods can be booted rapidly while more capacity is provisioned.

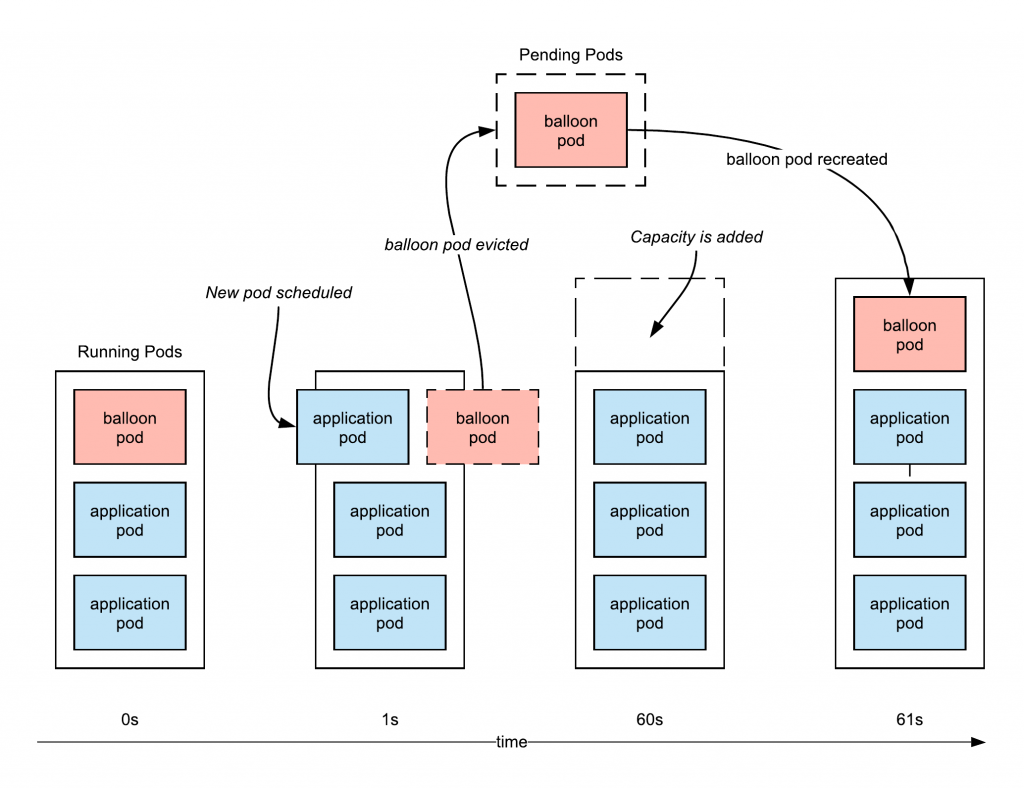

How it works is that you schedule a low-priority placeholder (a.k.a. “balloon”) pod with the extra capacity you desire (for example, 3 vCPU). This Pod is set to a very low priority, so that when other workloads are scheduled, it is evicted and the new pod can start booting right away. The evicted placeholder pod is then re-created in a Pending state, so that Autopilot (or your cluster autoscaler) will provision a new node, attempting to always provide the spare capacity that you requested.

To create our placeholder Pod deployment, first we’ll need a PriorityClass. This priority class should have the lowest possible priority (we want every other priority class to preempt it).

⚠️ Note: GKE has an undocumented limit for its autoscaling where priorities lower than -10 won’t trigger an upscale. So here, we’re using a still-low -10 priority, rather than the lowest possible value of -2147483648.

    apiVersion: scheduling.k8s.io/v1
    kind: PriorityClass
    metadata:
      name: placeholder-priority
    value: -10
    preemptionPolicy: Never
    globalDefault: false
    description: "Placeholder Pod priority."

Now we can create our placeholder, “do nothing” container deployment. Importantly, it uses the placeholder (low) priority and has a zero-second termination grace period, so it terminates immediately.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: placeholder
    spec:
      replicas: 10
      selector:
        matchLabels:
          pod: placeholder-pod
      template:
        metadata:
          labels:
            pod: placeholder-pod
        spec:
          priorityClassName: placeholder-priority
          terminationGracePeriodSeconds: 0
          containers:
          - name: ubuntu
            image: ubuntu
            command: ["sleep"]
            args: ["infinity"]
            resources:
              requests:
                cpu: 200m
                memory: 250Mi

When creating this yourself, consider the number of replicas you need and the size (memory and CPU requests) of each replica. The size should be at least that of your largest regular Pod (otherwise, your workload may not fit in the space freed when the placeholder Pod is preempted). At the same time, don’t increase the size too much: if you wish to reserve extra capacity, it is better to use more replicas than to use replicas that are much larger than your standard workload Pods.
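
As a rough sketch of that sizing advice, suppose (hypothetically) that your largest regular Pod requests 500m CPU and 1Gi of memory, and that you want four such Pods to be able to start immediately. The numbers below are illustrative assumptions, not recommendations; the manifest is otherwise the same placeholder Deployment, with each replica sized to match the largest workload Pod.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: placeholder
spec:
  # Assumption: headroom for 4 workload Pods at once.
  # Total reserved: 4 x 500m = 2 vCPU and 4 x 1Gi = 4Gi.
  replicas: 4
  selector:
    matchLabels:
      pod: placeholder-pod
  template:
    metadata:
      labels:
        pod: placeholder-pod
    spec:
      priorityClassName: placeholder-priority
      terminationGracePeriodSeconds: 0
      containers:
      - name: ubuntu
        image: ubuntu
        command: ["sleep"]
        args: ["infinity"]
        resources:
          requests:
            # Assumption: matches the largest regular Pod's requests,
            # so evicting one placeholder frees enough room for it.
            cpu: 500m
            memory: 1Gi
```

Keeping each replica the size of one workload Pod means that scheduling a single new Pod evicts a single placeholder, rather than displacing a much larger reservation than it needs.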

For these placeholder Pods to be preempted by other Pods that you schedule, those Pods need a priority that is higher than the placeholder’s, and their priority class must not have a preemptionPolicy of Never. Fortunately, Pods without a priority class get a default priority of 0 and a preemptionPolicy of PreemptLowerPriority, so by default all other Pods will displace our placeholder Pod.
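
To illustrate, here is a minimal sketch of a regular workload that would displace a placeholder Pod. The name `my-app`, its labels, and the `nginx` image are hypothetical stand-ins for your own workload. The point is that it sets no `priorityClassName`, so, assuming the cluster has no custom global default priority class, it runs at priority 0, which is higher than the placeholder's -10.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-app            # hypothetical workload name
spec:
  replicas: 2
  selector:
    matchLabels:
      pod: my-app-pod
  template:
    metadata:
      labels:
        pod: my-app-pod
    spec:
      # No priorityClassName is set: the Pod gets priority 0 and the
      # PreemptLowerPriority policy, so it can preempt the -10 placeholder.
      containers:
      - name: my-app
        image: nginx      # stand-in image for illustration
        resources:
          requests:
            # No larger than one placeholder replica (200m CPU, 250Mi),
            # so it fits in the space a single eviction frees.
            cpu: 200m
            memory: 250Mi
```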

To represent the Kubernetes default as its own priority class, it would look like the following. As you don’t actually need to change the default, I wouldn’t bother configuring this. But if you’re creating your own priority classes, you can use this as the reference (just don’t set globalDefault to true, unless that’s what you really intend). Once again: for the placeholder Pod preemption to work, be sure not to set preemptionPolicy to Never.

    apiVersion: scheduling.k8s.io/v1
    kind: PriorityClass
    metadata:
      name: default-priority
    value: 0
    preemptionPolicy: PreemptLowerPriority
    globalDefault: true
    description: "The global default priority. Will preempt the placeholder Pods."

This reserved capacity is not free, of course: the placeholder Pod counts just like any other for Autopilot billing. So weigh the added cost against the ability to scale with higher responsiveness.

This article is bonus material to supplement my book Kubernetes for Developers.
